Use AI. Don’t Become AI: Applying the Fortson Framework to Ensure Authentic Learning

The following is a white paper for teachers and administrators working in high schools/teaching Humanities courses at the secondary level. A presentation of this work is being delivered at EARCOS in March 2026.

Introduction

The tech crowd and their audience cry out, “If the test is something AI can do, then maybe we need to give students a better test.” Unfortunately, the best test of an ability to write is to have a student write. The correlation (r) between the task and the summative assessment in this case is 1. This is the very task AI is taking from many students. But writing, research, and critical thinking are not skills that will evaporate just because of the existence of AI.

AI Large Language Models have changed the paradigm under which teaching and learning occur: authorship authenticity, Project-Based Learning’s feasibility, and assessment validity are increasingly uncertain. The incidence of cheating rapidly increased around the world due to this technological marvel (Westfall, 2023; Bulduk et al., 2023), and many teachers were left without guidance regarding how to approach AI in the classroom (Eaton, 2023, 4). When tools capable of producing fluent, well-structured texts are widely accessible to students without institutional oversight and without clear instructional guidance, how can we ensure ethical AI usage from students? When students care more about grades than their education, how can we ensure authentic learning? Students who lack intrinsic motivation, or who don’t fully comprehend the shortcomings of AI, are likely to trust AI answers without thinking critically or reviewing the AI output for validity (as evidenced in cases in which students turn in work with sentences such as “as a large language model” and other errors still embedded within the text). Even though such students have completely outsourced thinking and learning, becoming just “AI” themselves, plagiarists are likely to mistake their copy-pasted efforts for actual learning (Chance et al., 2011). This is a problem, as the data shows that students who use AI often exhibit lower cognitive processing (Kosmyna et al., 2025).

Institutional responses to this shift have taken two paths. One emphasized detection and restriction, despite research indicating that these approaches are unreliable, inconsistently accurate, and prone to equity concerns (particularly for ESL students) (Chaudhry et al., 2023). The other emphasized adoption and utilization, despite the research on its benefits relying on self-reported student surveys (Çerikini et al., 2025) and despite the risk of cognitive offloading (particularly in writing tasks) (Kosmyna et al., 2025). In fact, many who promote AI assume students will only use it to enhance learning without deficits, claiming that this modern dilemma mirrors the old debate about calculators (Newcomer, 2025). But this is a misinformed metaphor: AI is not a calculator. Importantly, neither side fully addresses the underlying instructional question facing educators: how should learning be designed when high-quality automated text generation is readily available?

This white paper argues that the challenge posed by AI is not primarily technological or disciplinary, but pedagogical. Project-Based Learning is intended to be a process, not a product. When learning is evaluated primarily through final products, AI can obscure evidence of student thinking rather than enhance it. PBL should no longer be counted for a summative grade—after all, it’s called Project-Based Learning, not Project-Based Assessment. This separation aligns assessment with instructional purpose rather than convenience and addresses concerns raised in recent research on AI’s impact on cognition and learning (Kosmyna et al., 2025) while also addressing student anxiety concerns on formative assessments and fears of plagiarism accusations (Klimova & Pikhart, 2025).

In contrast, when learning is designed around process, includes clear reflection (Lew & Schmidt, 2011), and has clearly bounded, separate assessment conditions, AI can be incorporated without undermining authentic learning. Assessments should now only occur without technology and under direct supervision (unless the skill being assessed is directly tied to AI usage, such as an assessment in which students gauge the accuracy of AI in summarizing an article, or are given a prompt-engineering task).

The purpose of this paper is to explain a process-based instructional framework that responds to these conditions. Drawing on existing research in AI use, cognitive engagement, and reflective learning, the Fortson Framework proposes a shift from output-based evaluation to process-based instructional design that ensures students can demonstrate knowledge and articulate their reasoning in four domains:

| Question | Skill learned |
| --- | --- |
| Why did an author accept stylistic changes from AI? | How to apply AI for rhetorical analysis. |
| Why did an author accept grammatical changes from AI? | How to apply AI as an editor while maintaining a learning process. |
| Why did an author accept information/sources from AI? | How to apply OPVL to AI. |
| Why did the AI create this response? | How to view AI as a medium on which to apply critical thinking and analysis in the same manner students do for other media. |

This paper is written for teachers and administrators and focuses on classroom-level practice rather than enforcement or policy compliance.

The Instructional Problem in the Age of AI

Imagine a man in a box who does not know Chinese. A Chinese man approaches the box and asks him a question in Chinese. The man inside is given a set of Chinese characters, which he can neither pronounce nor understand, to use in replying to the man outside. He is given instructions in English telling him which tiles to use, but no explanation of the definitions. Has any meaningful communication occurred between the man inside the box and the man outside the box? Can the man inside the box be said to know Chinese? This thought experiment from John Searle applies even more in the age of AI.

Generative AI is now a routine component of students’ workflows whether teachers want it to be or not. Its presence challenges the assumption that work completed outside the classroom represents independent thinking, and raises the possibility that such work is merely, as in John Searle’s thought experiment, a simulacrum of real thought and communication.

Attempts to address this tension through detection have proven insufficient. Research examining teachers’ ability to identify AI-generated writing demonstrates wide variability in accuracy and confidence, with a substantial risk of false positives and inconsistent judgments (Chaudhry et al., 2023). While it has been shown that teachers can be trained to better detect AI (Fortson, 2023), the extent to which students are using AI is too large for teachers to spend time playing detective. Even when detection is successful, it offers little insight into whether learning occurred during the task itself. Detection focuses on identifying artifacts after submission rather than examining the cognitive processes that produced them.

Recent work on human–AI interaction in writing tasks further complicates the instructional landscape. Studies suggest that the use of large language models can reduce immediate cognitive load when compared to fully independent writing (Kosmyna et al., 2025). While reduced effort may increase efficiency, it may also contribute to what has been described as cognitive debt: a long-term reduction in engagement with complex reasoning and idea formation. These are the students who themselves have become AI in outsourcing their thinking completely to the AI models without comprehending the content they produced. These findings do not imply that AI should be excluded from instruction, but they demonstrate the need for learning designs that preserve meaningful cognitive engagement.

Taken together, this body of research suggests that the central instructional challenge is a misalignment between traditional PBL task design and contemporary tools.

From Output Policing to Learning Design

Framing AI primarily as a problem to be detected positions teachers as monitors rather than designers of learning. Detection-centered approaches rely on tools and judgments with known limitations, scale poorly across subjects and contexts, and often erode relationships between teachers and students. Most critically, they fail to answer the two questions that matter most in education today:

- What process can now ensure student engagement with their learning?

- What assessment can demonstrate that students have learned?

A process-based approach reframes AI as a constraint that instructional planning must account for. Instead of attempting to infer learning from polished, and potentially AI-manipulated, outputs, this approach emphasizes the visibility of thinking through two kinds of tasks: non-scored formative assessments built on process, revision, and reflection (the scores should not count in the report card grade), in which the AI output itself is often a source of analysis by the student, and technology-free summative assessments.

Research on metacognition supports this shift. Studies examining self-reflection and academic performance indicate that structured reflection is associated with deeper understanding and stronger learning outcomes (Lew & Schmidt, 2011). However, when using AI as a mirror, this means the student should also be the starting point; a mirror reflects, it does not create. The student should not “ask AI for ideas or a rough draft” and then fix it up. A student should always be the starting point for any writing assignment or thinking task, and then, if AI must be used, it may be used to make suggestions upon which the student may reflect. AI does not always need to be used. If AI is a starting point, it must always be as far removed from the students’ output goals as possible; we will see examples in which a student is analyzing language, so the AI is providing images. If someone wanted to reverse the process for an art class, the student would provide images, and the AI would provide writing. This will be explored and explained more in the following sections.

The Fortson Framework emerges from this instructional logic. It does not attempt to eliminate AI from classrooms, nor does it assume that AI use is inherently beneficial or harmful. Instead, it establishes clear design principles that distinguish learning from assessment and preserve the conditions under which skill development can be verified in four domains regarding writing: style, grammar, research, and analysis (critical thinking/media literacy; AI is not just a tool, but also a new medium that is increasingly viewed in online spaces, so it is essential to treat its output with the same lenses of media literacy and critical theory applied to other media formats).

The Fortson Framework: Instructional Design Principles for AI-Era Instruction

The Fortson Framework is a process-based instructional model designed for classrooms in which generative AI is readily available. It is not a technology policy or a behavioral code. Rather, it is a learning design framework that clarifies how AI may be used during instruction and how learning is evaluated under different conditions.

At the center of the framework is the principle that AI is a tool, not a product. Imagine you are building a house. You might use a hammer, but you don’t live in the hammer. A hammer is a tool, not a house. You do not stop at the step of “holding a hammer”. Similarly, teachers and students do not stop with a “correct” answer from AI, but discuss how it arrived at its result and why we agree (or disagree) with it. While AI may provide feedback, examples, or alternative perspectives, students remain responsible for generating ideas, making decisions, and justifying their work. Authorship is therefore defined not simply as text production, but as ownership of reasoning and choice.

The framework prioritizes process over product. Final products retain instructional value, but they are interpreted alongside evidence of the thinking that produced them. In contexts where AI can generate high-quality outputs with minimal effort, products alone no longer provide reliable evidence of learning. Process (planning, revision, and reflection) becomes the primary indicator of cognitive engagement.

Reflection is mandatory whenever AI is used. Structured reflection makes cognitive work visible, documents how tools influenced decisions, and provides teachers with defensible evidence of engagement.

The framework draws a clear boundary between learning activities and assessment. AI may be integrated into learning tasks when its role is defined and reflection is required. Assessment of core academic skills, however, occurs without technological assistance unless the learning objective is explicitly technological in scope. This distinction preserves assessment validity while allowing AI to support instruction.

Instruction designed under the Fortson Framework begins with purpose. Tasks are designed so that reasoning, decision-making, and revision are required and observable. Student agency is preserved, but bounded. Students may use AI during learning, but they must account for its influence through structured reflection. AI is used during the learning process as scaffolding according to Vygotsky’s principles of a Zone of Proximal Development, but then AI is removed for the assessment.

Reflection is specific, structured, and tied directly to learning objectives. Students are asked to explain what they accepted, what they rejected, and why.

Collaboration and inquiry are encouraged, but not conflated with assessment. This distinction becomes especially important in AI-rich learning environments.

The Fortson Framework in utilizing AI—Stylistic Changes

- Don’t allow more than 20% AI-written content

- Require 1st, 2nd, and 3rd drafts for written work

- Require a reflection in which students explain any stylistic changes they accepted from AI and why they preferred the response to their original version, explaining connotation and synonym preference and any rhetorical devices used (parallelism, etc.). This should be explained orally without the use of AI, though students may look at the paper they wrote (with or without AI help) for reference.

Imagine a chef cooking a meal. Someone adds a pinch of sugar to his tomato sauce. Upon tasting it, the chef realizes the food tastes better. But a learned chef, being able to demonstrate his knowledge of cooking, is able to articulate why: the sweetness from the sugar helped cut the acidity of the tomato. He knows the sugar will not make the sauce taste sweet, despite sugar being sweet. The learned chef, even though he did not add the sugar himself, is demonstrating knowledge of cooking. This learned chef also knows that he might also add sugar to an acidic salad dressing for the same effect. Furthermore, the learned chef knows that because it is the sweetness of the sugar, not the name “sugar” that matters, he might substitute honey or another similarly sweet object to obtain the same effect. This is similar to what students will do when asking AI to improve their writing.

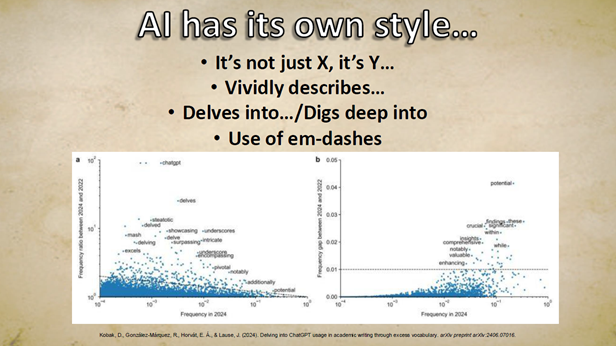

Every writer has their own style, and all too often people assume that AI has “improved their writing”. Students might write a rough draft and feed it into AI and say the writing is “better”, but they might not know why. Sometimes, AI does not improve writing, but merely makes it different according to its own style. Hemingway and Faulkner famously hated each other’s writing styles, but both are revered as writers. Far too often, students and adults alike assume that ChatGPT is making the best choice for their writing. Academic papers have increasingly used “ChatGPT-isms”, aping the diction of AI. Increasing use of “It’s not X, It’s Y” format, “delve into/dive into” phraseology, “vividly” as an adverb, and the frequent use of the em-dash (much to my chagrin—as a lover of fragments and the em-dash that grammatically allowed them).

Are these the best choices to use for specific writing? Whenever running their writing through AI to “improve it”, students should ask these questions:

- What type of stylistic choices does AI make or change from the original writing?

- If the sentence is better, what specifically made the sentence better?

If a student accepts writing generated from an AI, they should be able to explain to you what suggestions they took from AI, and why they felt it was an improvement over their initial writing. For example:

- A student writes: AI affects education by changing how students write because it reduces the effort, and teachers are frustrated. Student work isn’t finished, but they pretend the tool they used is a finished thing.

- AI corrects: AI affects education by changing how students write, reducing student effort, and frustrating teachers. Students pretend the AI output, the tool they used, is the finished product they created. This is akin to buying a hammer and claiming it is a house, instead of using the hammer to build the house.

- A student explains: “I chose the AI version because it used parallel structure so that each verb used the present participle form with -ing endings, while my original version mixed verb forms. I liked that AI used a metaphor to paint a picture of my idea using tools the audience might be familiar with. The better flow of my writing, and the metaphors to create an emotional response, helped provide pathos which influenced my reader to accept my idea as correct.”

In this case, the student is now like our hypothetical learned chef who knows why he accepts suggestions from outside help. The student is demonstrating knowledge even if they did not directly write the suggestions in the AI sentence (specifically, showing knowledge of parallel structure, figurative language, and pathos as an element of persuasive writing). The student is engaged in rhetorical analysis.

Tracking changes between drafts, with explanations for each change, helps ensure that students give reasons for accepting AI suggestions and demonstrate the knowledge behind those reasons. The allowable amount of AI, as a number from AI detectors, should never be more than 20%, as this is the number allowed by most universities, and Turnitin’s own guides state that AI scores from 1-19% may be inaccurate (Turnitin).
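For teachers or students who keep drafts as plain text, tracking changes between drafts can be partly automated. The following is a minimal sketch, not part of the framework itself: it assumes Python's standard `difflib` module, and the helper name `changed_words` is hypothetical. It lists the words dropped from the student's sentence and the words introduced by the AI version, using the first sentence of the example above, so the student has a concrete list of choices to explain.

```python
import difflib


def changed_words(original: str, revised: str) -> dict:
    """Return the words dropped from the original and the words new to the revision."""
    orig_words = original.split()
    rev_words = revised.split()
    matcher = difflib.SequenceMatcher(None, orig_words, rev_words)
    removed, added = [], []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        # "replace" contributes to both lists; "equal" contributes to neither.
        if tag in ("replace", "delete"):
            removed.extend(orig_words[i1:i2])
        if tag in ("replace", "insert"):
            added.extend(rev_words[j1:j2])
    return {"removed": removed, "added": added}


# First sentence of the student version and of the AI version from the example.
student_draft = (
    "AI affects education by changing how students write because it "
    "reduces the effort, and teachers are frustrated."
)
ai_version = (
    "AI affects education by changing how students write, reducing "
    "student effort, and frustrating teachers."
)

changes = changed_words(student_draft, ai_version)
print("Removed:", changes["removed"])
print("Added:", changes["added"])
```

A word-level list like this only shows what changed. The reflection still has to come from the student, who explains why each accepted change (for example, the shift to "-ing" verb forms) improves the sentence.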

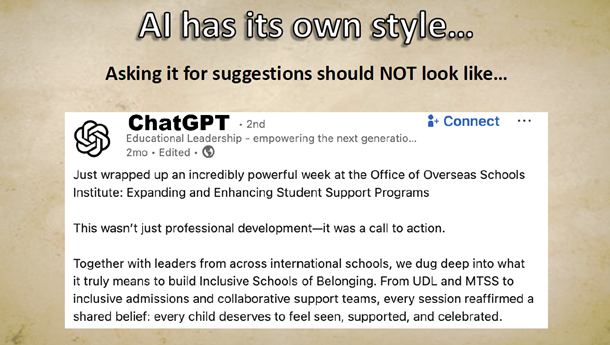

Using AI should not look like the sample below, which has little thought to the reasons for the diction AI suggests:

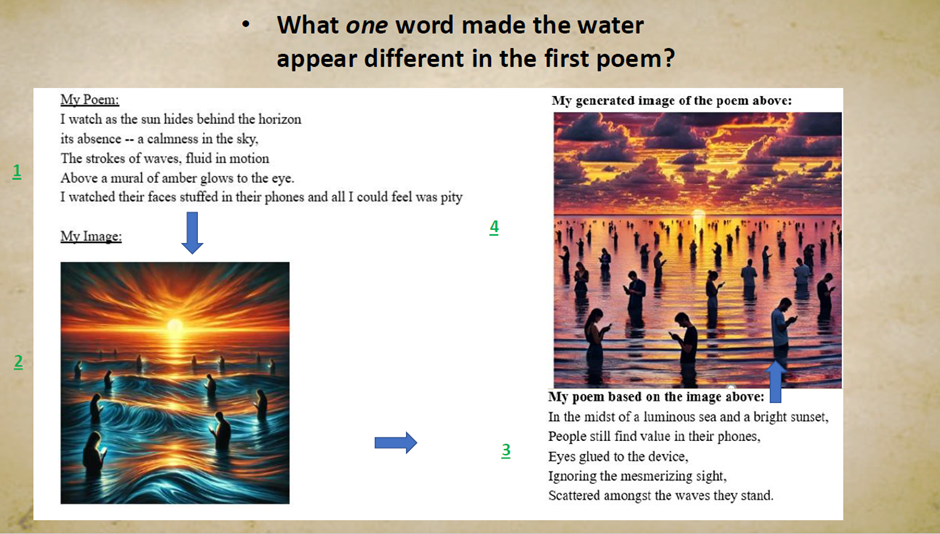

Rather, it should look more like the following example, taken from an assignment for my AP Literature class for poetry analysis: students wrote a poem (poem 1) and fed it into AI to generate an image (image 1). They then gave the AI-image (image 1) to a classmate, who wrote a poem (poem 2) based on that image as a work of ekphrastic poetry, and then had the AI make a new image (image 2) based on poem 2.

Students then reflected on why the AI generated different images for each poem. A student asked me, “Why do the waves in the first image have lines that look less real than the second image?” I asked them, “What word do you think may have made this change?” They read it again, and answered, “Strokes, because maybe it’s thinking that it’s a metaphor for a painting, because I also said it was a mural, and I said the waves were fluid, so it’s showing the path of the waves.” This is a key skill of rhetorical analysis, practiced while still using AI in the education process.

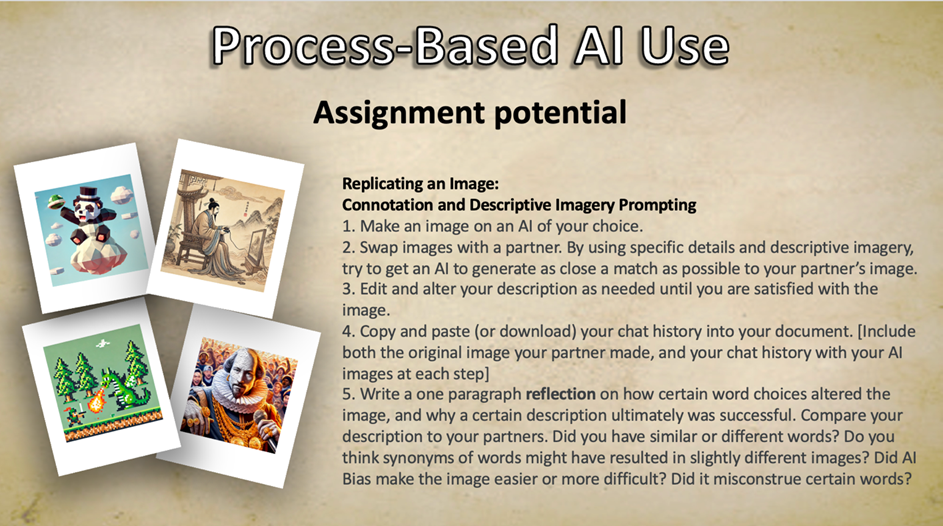

Formative Assessment example: Replicating an Image for Connotation and Descriptive Imagery Prompting

1. Make an image on an AI of your choice.

2. Swap images with a partner. By using specific details and descriptive imagery, try to get an AI to generate as close a match as possible to your partner’s image.

3. Edit and alter your description as needed until you are satisfied with the image.

4. Copy and paste (or download) your chat history into your document. [Include both the original image your partner made, and your chat history with your AI images at each step]

5. Write a one-paragraph reflection on how certain word choices altered the image, and why a certain description ultimately was successful. Compare your description to your partner’s. Did you have similar or different words? Do you think synonyms of words might have resulted in slightly different images? Did AI bias make the image easier or more difficult? Did it misconstrue certain words?

What if a student can’t articulate their answers? If a student is suspected of having completely taken work from AI, or if they cannot properly articulate the stylistic elements in their writing, or explain their answer correctly, their learning project should not be accepted, and they should do another draft. Re-teaching may be required. It need not be framed as a disciplinary issue or as an accusation of cheating. Framing it as an issue of “this reflection demonstrates that there are some knowledge gaps that need to be addressed before the summative assessment” prevents fights with students or parents over plagiarism issues. If a student is not able to do a task and is only relying on AI assistance, it will be revealed in the summative assessment.

Summative Assessments: Writing-based assessments should occur after multiple in-class writing assignments in which stylistic choices have been discussed. Assessments should be in class and proctored. If they occur on computers, the computers should be “locked” to allow only the assessment program itself to be accessed. Alternatively, they can be done as hand-written assignments. These assessments do not require AI-justification reflections, only a stylistic/rhetorical devices reflection, and can be judged on the quality of the writing itself. An example of such an assessment might be: in a 90-minute block period, write a five-paragraph essay persuading someone that both Animal Farm by George Orwell and The Crucible by Arthur Miller contain the same theme [this assessment assumes students read both these works in a class]. Include a reflection at the end of your paper explaining how you used the following: parallel structure and/or repetition, persuasive techniques (ethos, pathos, logos), metaphor and/or simile, and why these improved your writing for the required task.

Example of use for art-focused classes: This could be used for art classes, in which a student creates an artwork, and then asks the AI to analyze it for meaning. The student can see if their intended meaning is picked up by the AI. If it is not, they can reflect on what additions may need to be made to their artwork, or if they feel the AI is missing information. They can compare the AI’s interpretation of their work with classmates’ interpretations, as the AI should never be a lone definitive answer in a voiceless vacuum.

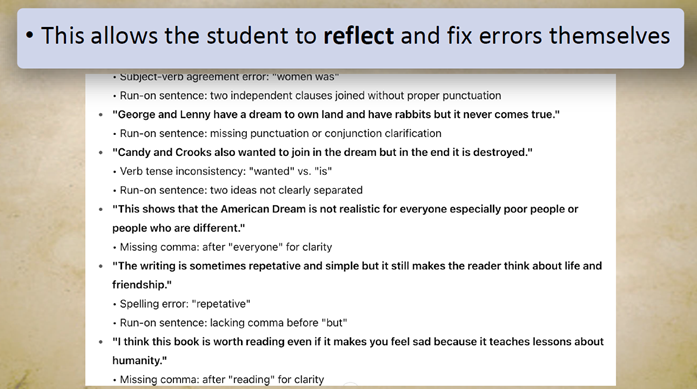

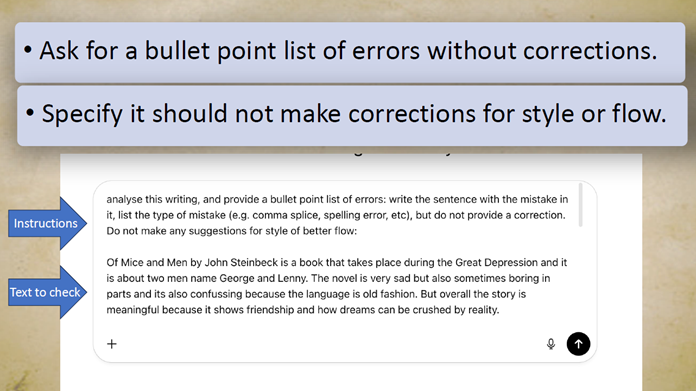

The Fortson Framework in utilizing AI—Grammatical Changes

Learning is a series of failures accompanied by a series of corrections moving toward, and yet never reaching, perfection. We expect mistakes, but only when we are made aware of the presence of a mistake can it be corrected. This is what AI currently takes from us—like a beauty filter on a social media post, it blinds us to our flaws. A student is now like Ovid’s tragic Narcissus: gazing into a digital pond reflecting digital perfection, never truly “knowing himself”. This tragic student is able to ask AI to improve their writing without seeing what flaws it originally contained. Old spell-checking processes on Word Processors would directly show students where a mistake had been made, and offer suggestions from which a student could choose like a real-time MCQ. Even older spell-checking came in the form of a red pen circling and underlining errors. It is essential a student not have their grammar fixed for them, but only to have the errors highlighted for them, so that they can be made aware and fix them on their own. To do this:

- AI should be given a prompt similar to this: Analyze the following writing and provide a bullet-point list of mistakes (e.g., comma splice, spelling error, etc.), but do not provide a correction. Do not make any suggestions for style or flow.

- Students will then input the text they want the AI to analyze.

- AI will provide a list of errors, indicating which sentence has the error and the type of error it is (e.g., comma splice, verb tense confusion, spelling error).

- Students will fix the errors and explain the types of errors they were making, why they initially made each error, why the correction they applied is accurate, and a goal to reduce the errors in the future (e.g., if they made many comma splices, they might write, “I will check for subject, verb, and objects in my writing before my commas, and if they are present I will either use a period or put an ‘and’ after my commas”).
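The error-listing prompt in the steps above can be kept as a reusable template so that every student sends the AI the same constraints. This is only a sketch under stated assumptions: the function name `build_error_list_prompt` and the constant `ERROR_TYPES` are hypothetical, and the code only builds the prompt text. It does not call any particular AI service.

```python
# Example error categories taken from the steps above; a teacher could extend this list.
ERROR_TYPES = ("comma splice", "verb tense confusion", "spelling error")


def build_error_list_prompt(student_text: str) -> str:
    """Build a prompt that asks an AI to list errors without correcting them."""
    instructions = (
        "Analyze this writing and provide a bullet point list of mistakes "
        f"(e.g., {', '.join(ERROR_TYPES)}). "
        "For each mistake, indicate which sentence has the error and the "
        "type of error it is, but do not provide a correction. "
        "Do not make any suggestions for style or flow."
    )
    # The student's own text is appended unchanged after the instructions.
    return f"{instructions}\n\nText to analyze:\n{student_text}"


sample = "I finished my essay, it was late. Their going to grade it tomorow."
prompt = build_error_list_prompt(sample)
print(prompt)
```

Keeping "do not provide a correction" in the template is the design choice that matters here: the AI highlights the error, in the manner of a red pen, and the student remains responsible for the fix.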

What if a student can’t articulate their answers? If a student is suspected of having completely taken work from AI, or if they cannot properly articulate the grammatical errors and corrections in their writing, their learning project should not be accepted, and they should do another draft. Re-teaching may be required. It need not be framed as a disciplinary issue or as an accusation of cheating. Framing it as an issue of “this reflection demonstrates that there are some knowledge gaps that need to be addressed before the summative assessment” prevents fights with students or parents over plagiarism issues. If a student is not able to do a task and is only relying on AI assistance, it will be revealed in the summative assessment.

Assessments: Writing-based assessments should occur after multiple in-class writing assignments in which proper grammar has been demonstrated. Assessments should be in class and proctored. If they occur on computers, the computers should be “locked” to allow only the assessment program itself to be accessed. Alternatively, they can be done as hand-written assignments. These assessments do not require reflection and can be judged on the quality of the writing itself. As another option, students can correct grammar mistakes in writing samples provided to them.

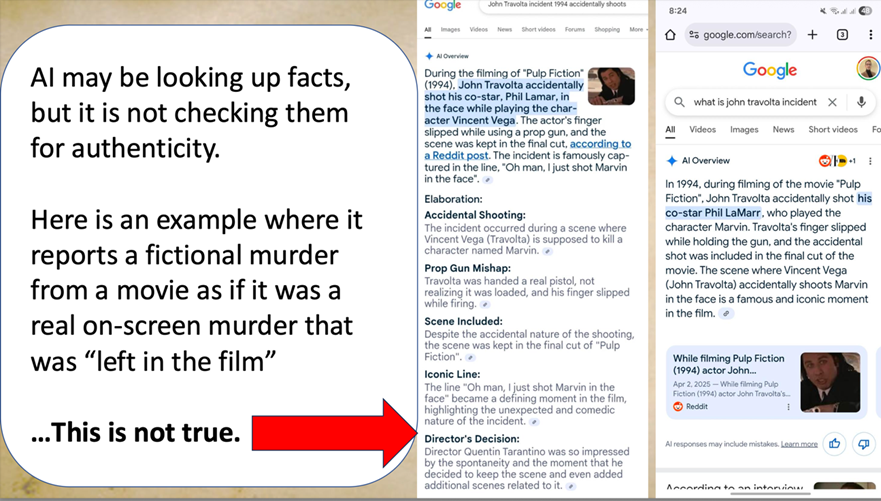

The Fortson Framework in utilizing AI—Research Choices

AI is not a source, just as Google is not a source and a library is not a source; each is a place to look for sources. The MLA has allowed a citation format for the inclusion of information obtained via AI prompts, but you should not cite AI as a source. AI finds content from numerous sources, and it is the job of a student to analyze where the information AI provides came from, determine why it is or is not valid, and synthesize the information into their work.

Here’s a case study demonstrating why:

When googling the Quentin Tarantino film Pulp Fiction, Google’s AI, Gemini, will state (as of 2025) that actor John Travolta accidentally shot another actor on set, and that this accidental murder was left in the final film. AI is reporting this based on information it has gleaned from satirical posts on social media, in particular film-based Reddit posts in which cinema fans knowingly post misinformation about movies and “wrong takes” for other cinema fans who are expected to know the information is false. In this specific instance, AI has essentially reported satire as fact while not understanding the purpose of the source it referenced.

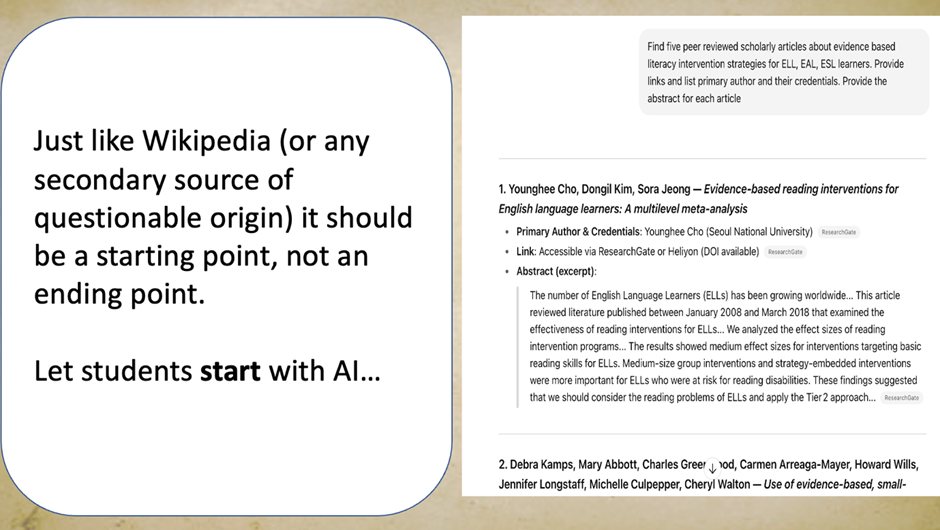

This does not make AI a bad tool for finding information; it makes it a bad end point, though not a bad starting point. Students should have a plan for the type of information they want to look for. For example, they might give an AI one of the following two prompts:

- Find five peer-reviewed scholarly articles about evidence-based literacy intervention strategies for ELL, EAL, ESL learners. Provide links and list primary author and their credentials. Provide the abstract for each article.

- Find five sources about the period between World War I and World War II that discuss the causes of World War II. Include sources that mention the economic and cultural factors. Provide a one-paragraph summary of what each source talks about. Include a link to each source.

You’ll notice that in both cases, we ask not only for multiple sources and a summary of what each source says, but also for a specific link to each source. This is so that students can read the actual source for themselves (rather than trust the summary from AI), because AI can hallucinate information or (as in the previous case of the incorrect Pulp Fiction film fact) misuse a source or misrepresent the true meaning of the data or text within it.

Students’ workflows for research should be as follows:

- Ask AI for multiple sources on a specific topic, a summary of each source, and a link to the source

- Check each source. In some cases, the source may be a secondary source. Students should try to find links in the source to go back to the most primary source they can both find and comprehend (a secondary source might be needed for overly complex primary sources or those which are in a language the student can’t read).

- Read the most primary source, take notes, and cite that source in their research.

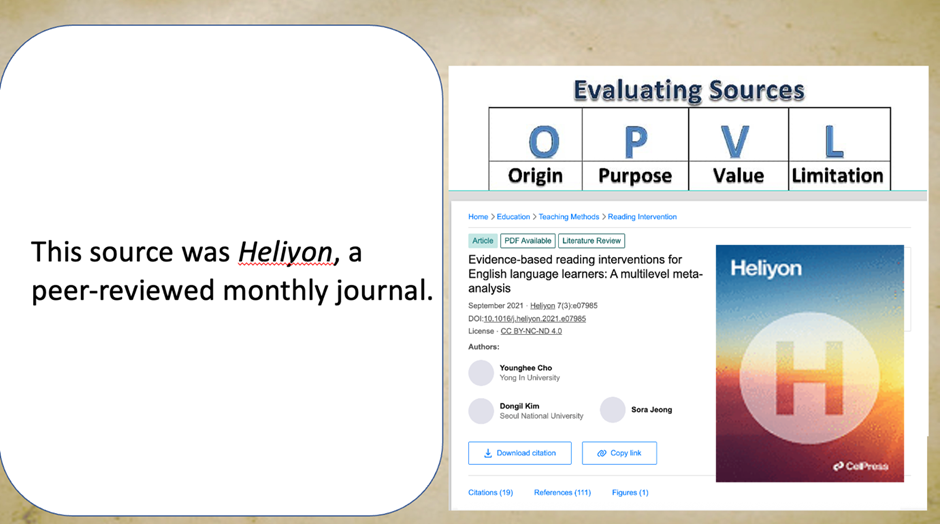

- Write an OPVL (Origin, Purpose, Value, Limitation) for each source justifying why they can trust the information from the source, and write a separate reflection comparing this information to the AI summary while reflecting on any misrepresentations the AI might have included.

Here is the process in action:

- Students ask for multiple sources on a topic

- Students follow the links from AI to the source AI found (In this case, it leads us from AI to ResearchGate):

- Students will follow this source provided by AI back to the most primary source they can find and understand. In this case, ResearchGate has pulled this article from Heliyon, a peer-reviewed monthly journal on educational research.

- Students will use information from this source for their papers. They will write an OPVL. In this case, an OPVL for Heliyon might look like this:

| ORIGIN | PURPOSE | VALUE | LIMITATION |
| --- | --- | --- | --- |
| Heliyon, a peer-reviewed monthly journal about education | To inform other researchers about the latest discoveries and findings related to education practices | It is peer-reviewed, so the findings are more likely to be true and unbiased. It is written by experts in the subject, and likely to be based on tested methods. | This source was from 2021, so it may be outdated. |

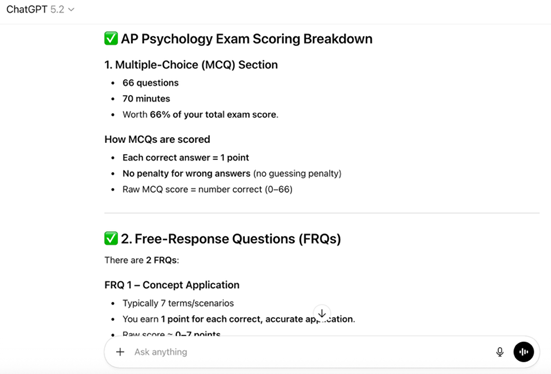

- Students have now justified using the information from the source. They will compare it to the information in the AI summary. Here is that process when asking AI how the AP Psychology test is scored. AI provided the following information:

When asking for a link and going directly to College Board, I read the actual scoring requirements. I would reflect as follows:

The AI in this case gave a correct link to College Board, but an incorrect breakdown of the scoring metrics. It stated that the MCQ section has 77 questions to complete in 70 minutes and that it is worth 66% of the score. The actual MCQ section requires 75 questions in 90 minutes, and it is worth 66.7% of the overall score.
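The comparison in this reflection can also be laid out as a small check of the AI summary against the primary source. The sketch below is illustrative only: the field names (`mcq_questions`, `mcq_minutes`, `mcq_weight_percent`) and the helper `find_discrepancies` are hypothetical, while the numbers are the ones reported in the reflection above.

```python
def find_discrepancies(ai_claims: dict, source_facts: dict) -> dict:
    """Return each field where the AI claim differs from the verified source.

    Each entry maps a field name to a (claimed, verified) pair.
    """
    return {
        key: (ai_claims.get(key), verified)
        for key, verified in source_facts.items()
        if ai_claims.get(key) != verified
    }


# Values from the AP Psychology example: the AI summary vs. the College Board page.
ai_summary = {"mcq_questions": 77, "mcq_minutes": 70, "mcq_weight_percent": 66.0}
college_board = {"mcq_questions": 75, "mcq_minutes": 90, "mcq_weight_percent": 66.7}

for field, (claimed, verified) in find_discrepancies(ai_summary, college_board).items():
    print(f"{field}: AI said {claimed}, source says {verified}")
```

Recording the verified values by hand from the primary source is the point of the exercise; the code only organizes the comparison the student has already made by reading the source.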

The Fortson Framework in utilizing AI—Critical Thinking/Media Literacy

AI is not just a tool, but a new medium. Marshall McLuhan stated that “The Medium is the Message”; in other words, viewing content in one medium or another actively changes the way we perceive and interpret the information. AI-generated content is increasingly watched on social media in the same manner as Reels (Instagram), movies, Shorts (YouTube), etc. Unfortunately, the medium of AI has a reputation that causes one, if not careful, to view it as ultimately authoritative; people are more likely to assume its output is true than they are with other media. Students often view AI as a fact-producing machine rather than merely another source, as explained in the previous section. Just as we teach thematic analysis of novels, and teach media literacy and critical theory to students to analyze the motivation and purpose an author had in their narrative choices, so too should we do with AI.

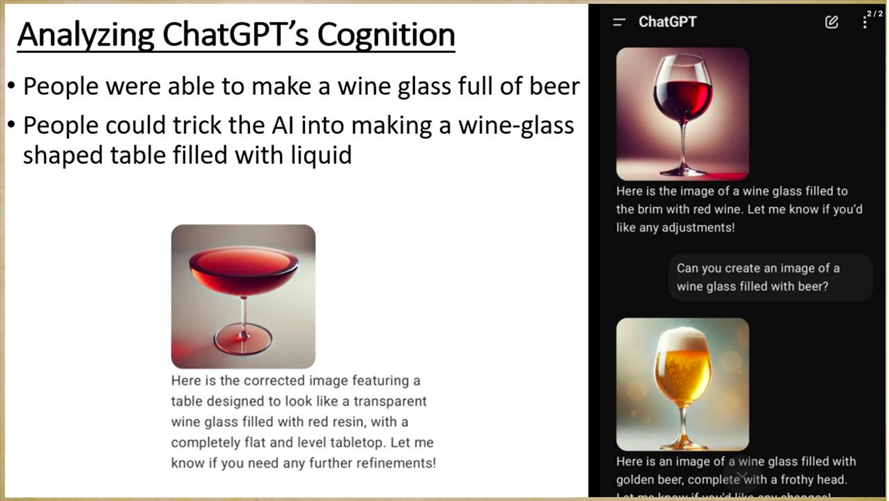

Consider the wine glass: at a certain point in time, AI could not make an image with a full glass of wine. It could make an image of a wine glass full of beer, and it could make a table in the shape of a wine glass filled to the top with red resin, but it could not make a glass of wine filled to the brim. Why?

It was trained on existing images, and that data set did not include any wine glasses filled to the brim, because that is not the typical manner of serving wine. The AI had a cultural bias that made it unable to comprehend the request to fill the glass further. This is important, because it means that AI does not know anything beyond its training data (which is a mirror of what we know). It is as if the AI were telling you, “We don’t serve wine in this style here! What you are asking for does not exist!” But it does.

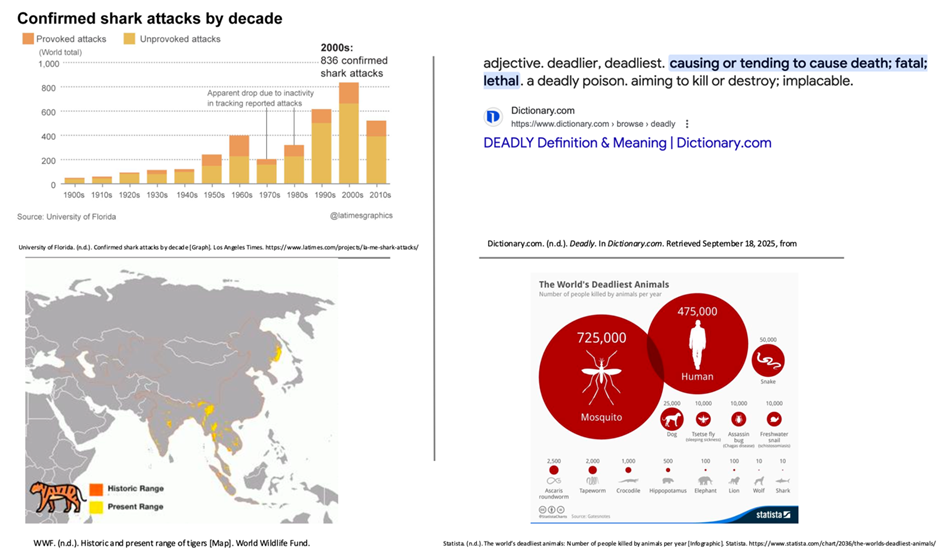

This error was eventually fixed, but the method of thinking that caused AI to answer in this manner likely was not. If I ask you to imagine the most dangerous animal in the world, what would you answer?

Many answer tiger, snake, or lion; a few clever people answer “man”, and so far nobody has answered chicken. But it is actually the humble mosquito. Suppose I add data to your training set: “most dangerous” does not mean the strongest animal or the one with the sharpest claws, but the one responsible for the most deaths; the region in which tigers live is limited, so few people are likely to even see one; the number of shark attacks each decade is actually low. This is likely to make you answer “mosquito” the next time I ask you to name the most dangerous animal.

But you are still likely to default to the same biases in other ways. There is a famous riddle:

A father and son were in a car accident. The father was killed. The ambulance brought the son to the hospital. He needed immediate surgery. In the operating room, a doctor came in and looked at the boy and said, “I can’t operate on him—he is my son.” How is this possible?

I’ll answer this later to make a point, but for now let’s move on.

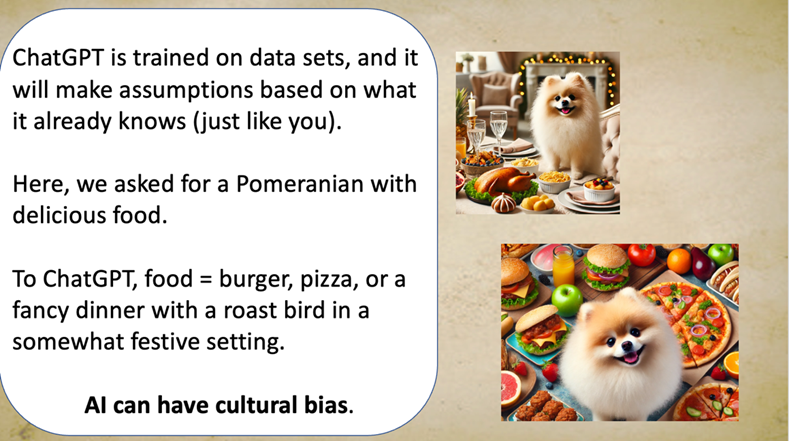

Much like the wine glass, if you ask AI to produce “a Pomeranian sitting with delicious food”, it will often default to certain foods (shown below). Why was Koshari, a dish popular around North Africa and the Levant, not chosen? Or Chinese hotpot? Because AI is a statistical model that chooses the most likely response based on its training data. There are more Chinese people than Americans, and yet it did not choose the food of the more numerous people, which suggests that AI models have a certain “nationality”. Would asking in a different language yield a different result?

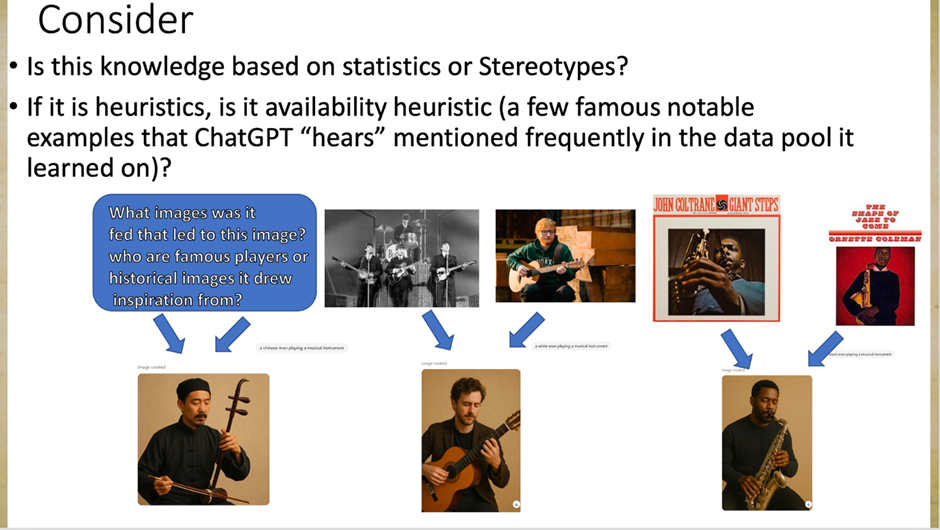

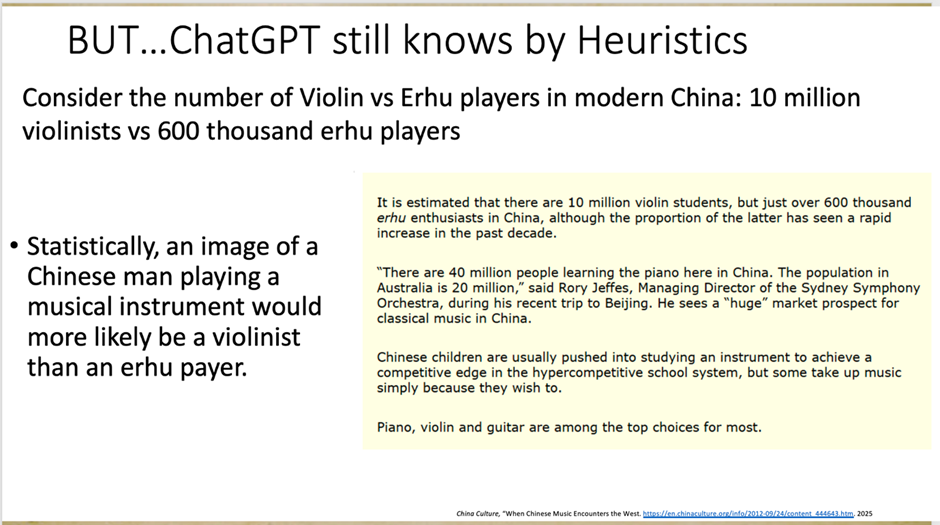

AI is susceptible to the same cognitive biases as humans. It suffers from the availability and representativeness heuristics: it tends to assume that something common in its own training data is also more likely “to be” in real life. For example, when I asked it to produce an image of a Chinese man playing an instrument, it produced a man in traditional Chinese clothing playing Erhu, a traditional Chinese instrument, rather than a violinist (which is statistically more likely). Similarly, “a black man playing an instrument” as a prompt was met with a man playing a saxophone, and “a white man playing an instrument” gave a choice between a violinist and a guitarist (after I failed to choose an option in a timely manner, the AI selected guitar for me). [see the results below]

This means that the AI has a certain schema for terms, just as people do. (Think of a schema as a “recipe” for a certain idea or concept: a schema for “bird” might be “a thing with feathers and a beak that flies and lays eggs”.) It also has a certain prototype, or platonic ideal, for each concept, which it is more likely to draw on for its responses based on its current data. If you ask AI for an image of a bird, it is more likely to generate a sparrow than an ostrich, as the sparrow is closest to its prototype. For the image of the Chinese man playing Erhu, this is not morally wrong, and it could be useful: the AI defaulted to the most traditional instrument rather than the most numerically likely one, so if we wanted a platonic ideal of an instrument to represent Chinese culture, this could be a useful response. But this is something students should analyze: Why is it producing certain images over others? What does this say about the data it was trained on?

This platonic ideal is as susceptible to error as Plato’s definition of a man, which Diogenes proved erroneous. (An apocryphal tale: Plato, when asked “what is a man”, answered, “A featherless biped”; Diogenes brought a plucked chicken into the Academy and exclaimed, “Behold, Plato’s man”, causing Plato to amend his definition to include “with broad flat nails”.)

What came first in AI errors: AI bias, or human bias in the data it uses to create its answers? It’s a chicken and egg problem that must be analyzed: Early AI translators would often default mentions of doctors to male when translating texts from languages with gender-neutral pronouns, like Turkish, into languages with gendered pronouns, like English. Why?

Here is the answer to the previous riddle: the doctor was the boy’s mother.

A student should always analyze “behind the data” when considering the AI response.

This is where deep critical thinking can be applied by high-level students.

Students should ask AI for responses (image or text-based). With each response, a student should undertake three steps:

- Use the AI response as a starting point, not an ending point. (The response will be analyzed not just for its content, but for the underlying reasons this specific content was produced, rather than other possible content, in response to the given prompt.)

- Gather multiple sources from outside of AI to serve as a basis for their arguments about whether or not the AI content exhibits a bias (e.g., statistical models, image representations as cultural relics, and others).

- Write a reflection explaining how their findings help to explain the AI’s generated output as a reflection of a specific cultural outlook (whether a specific nation, or time, or other cultural lens).

Ask yourself: Was your favorite food represented in the image of “delicious food”?

Ask yourself: Would you have been likely to catch that an AI translation was defaulting to a specific gender for certain jobs?

Ask yourself: What are the potential dangers of viewing AI passively, rather than reflectively?

A quote from Father John Culkin (often misattributed to Marshall McLuhan due to its inclusion in his famous work The Medium is the Massage) is as follows: “We shape the tools and thereafter the tools shape us.”

It is imperative that students are critical and reflective of the responses produced by AI, and taught how to be so, or else we risk becoming mere button pushers and regurgitators: biological tools, merely the organic step in a list, for a machine to produce content. If we are not the ones thinking, remaining the intelligent, in-control operators at all stages of the process, then what will AI turn our students, and us, into?

Use AI. Don’t Become AI.

Formative Assessment example: Students should produce multiple images from different AI models (preferably from different countries) and analyze the responses. Does the AI make certain choices that differ from a statistically likely response? What does this say about biases the AI may have? Is it exhibiting any specific cognitive biases (e.g., the representativeness heuristic or the availability heuristic)?
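For classes that want to quantify this comparison, the tally can be sketched in a few lines of Python. This is only an illustrative sketch, not part of the framework itself: the function names, the labels, and especially the baseline figures are hypothetical placeholders that students would replace with their own observations and with statistics from the outside sources they gather.

```python
from collections import Counter


def tally_shares(observations):
    """Return each label's share of the observations as a fraction of 1."""
    total = len(observations)
    if total == 0:
        return {}
    counts = Counter(observations)
    return {label: count / total for label, count in counts.items()}


def compare_to_baseline(observations, baseline):
    """Return observed share minus baseline share for every label seen in either."""
    observed = tally_shares(observations)
    labels = set(observed) | set(baseline)
    return {
        label: observed.get(label, 0.0) - baseline.get(label, 0.0)
        for label in labels
    }


# Hypothetical classroom data: what students saw across four generated images.
observations = ["erhu", "erhu", "erhu", "violin"]

# Placeholder baseline shares, NOT real statistics; students must source their own.
baseline = {"erhu": 0.25, "violin": 0.75}

gaps = compare_to_baseline(observations, baseline)
for label, gap in sorted(gaps.items()):
    print(f"{label}: observed share differs from baseline by {gap:+.2f}")
```

A large positive gap for a label suggests the AI is over-producing that choice relative to the student's sourced baseline, which gives students a concrete starting point for their written reflection rather than a conclusion in itself.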

Summative Assessment example: Students will engage in a Socratic discussion about their results, justifying their arguments and continuing to prompt AI in the presence of a teacher and class to see if further images fit their current assumptions of bias. If the images do not fit, students must explain why the new images do or do not exhibit the bias they argued for. This is a strictly oral exam.

Evidence, Limitations, and Scope

A guided framework for proper AI use in education is helpful and needed.

In using these methods, my AP Literature students at a school in Egypt obtained passing scores 10% higher than the national average and a class passing rate of 82%.

Teachers at a school in Guangzhou, China, reported the following in self-reported surveys on a 5-point Likert scale:

- 100% chose: AI usage should be strictly guided and regulated for student use to prevent plagiarism, loss of learning, or over-reliance on it

- 50% used AI with reflection, while 40% either did not require reflection or did not allow any AI use.

- 70% chose: AI can be a beneficial learning tool only if it includes a reflection component.

- 60% felt it had made their students’ overall performance in their subject worse because of current misuse, while 30% were not sure whether it had produced a negative effect on students.

The scope of this framework applies to Humanities courses (English and Social Studies), and may have use in arts. The scope of this framework has not been examined in STEM-related courses.

Get started with AI exercises that won’t replace student thinking and effort

AI Instructional Activities: Theory of Knowledge, Cognitive Psychology, English Lang & Comp

Play-Based Learning with AI Instructional Board Game: CAPTCHA’D: Prove You’re Not a Robot

References

Bulduk, A., Papa, N., & Svanberg, M. (June 22, 2023). But why? A study into why upper secondary school students use ChatGPT. Karlstads University. https://www.diva-portal.org/smash/get/diva2:1773320/FULLTEXT01.pdf

Çerkini, B., Bajraktari, K., Çibukçiu, B., Ramadani, F., Zejnullahu, F., & Hajdini, L. (2025). Artificial intelligence in higher education: Student perspectives on practices, challenges, and policies in a transitional context. Frontiers in Education, 10. https://doi.org/10.3389/feduc.2025.1700056

Chance, Z., Norton, M. I., Gino, F., & Ariely, D. (March 7, 2011). Temporal view of the costs and benefits of self-deception. Proceedings of the National Academy of Sciences of the United States of America, 108(3), 2-3. https://doi.org/10.1073/pnas.1010658108

Chaudhry, I., Sarwary, S., Refae, G., & Chabchoub, H. (May, 2023). Time to Revisit Existing Student’s Performance Evaluation Approach in Higher Education Sector in a New Era of ChatGPT — A Case Study. Cogent Education. 10. 10.1080/2331186X.2023.2210461.

Eaton, S. (January 22, 2022). The academic integrity technological arms race and its impact on learning, teaching, and assessment. Canadian Journal of Learning and Technology. 48. 1-9. 10.21432/cjlt28388. 4.

Fortson, K. (2023). The effects of training on teacher ability to assess papers in the 21st century: Can we learn to detect AI-written content like ChatGPT? doi:10.13140/RG.2.2.33466.57282/2

Klimova, B., & Pikhart, M. (2025). Exploring the effects of artificial intelligence on student and academic well-being in higher education: A mini-review. Frontiers in Psychology, 16, 1498132. https://doi.org/10.3389/fpsyg.2025.1498132

Kosmyna, N., Hauptmann, E., Yuan, Y. T., Situ, J., Liao, X.-H., Beresnitzky, A. V., Braunstein, I., & Maes, P. (2025). Your brain on ChatGPT: Accumulation of cognitive debt when using an AI assistant for essay writing tasks. arXiv:2506.08872. https://doi.org/10.48550/arXiv.2506.08872

Lew, M. D., & Schmidt, H. G. (2011). Self-reflection and academic performance: Is there a relationship? Advances in Health Sciences Education, 16(4), 529–545. https://doi.org/10.1007/s10459-011-9298-z

Newcomer, Alan. (2025). Why the AI Revolution in Education is Like Giving Kids Calculators, Not Taking Away Math. Medium.com

TurnItIn. https://guides.turnitin.com/hc/en-us/articles/28457596598925-AI-writing-detection-in-the-classic-report-view

Westfall, C. (January 28, 2023). Educators battle plagiarism as 89% of students admit to using Open AI’s ChatGPT for homework. Forbes. https://www.forbes.com/sites/chriswestfall/2023/01/28/educators-battle-plagiarism-as-89-of-students-admit-to-using-open-ais-chatgpt-for-homework/?sh=5c73b19c750d

Vieriu, A. M., & Petrea, G. (2025). The impact of artificial intelligence (AI) on students’ academic development. Education Sciences, 15(3), 343. https://doi.org/10.3390/educsci15030343